Bhavna Gupta: When Not to Use AI in Clinical Practice

Bhavna Gupta, AI Implementation Consultant, Anesthesiologist and Associate Professor at AIIMS Rishikesh, shared a post on LinkedIn:

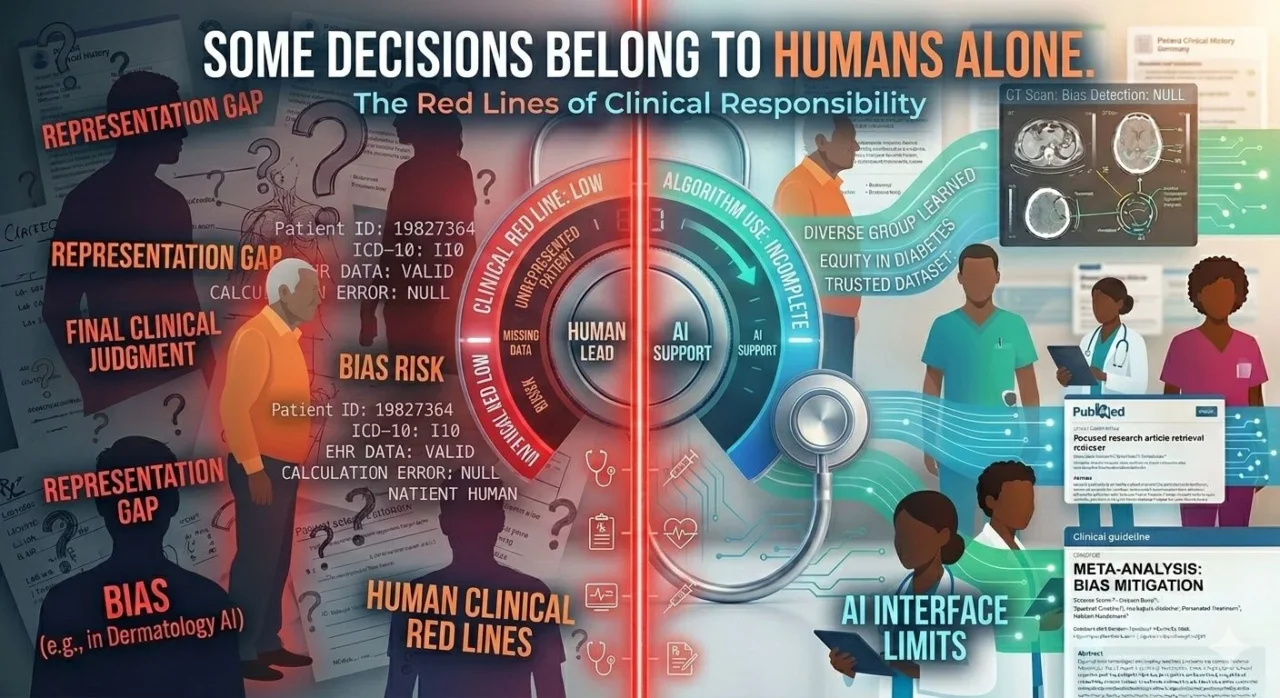

“When not to use AI – the clinical red lines.

A junior doctor asked last week:

‘Can I use AI to help decide ICU triage during a mass casualty?’

My answer was immediate:

‘No.’

Not ‘be careful.’

Not ‘with caution.’

No.

Because some decisions are too important to delegate.

Even partially.

Here’s what I’ve learned:

AI can assist thinking.

It cannot replace accountability.

And there are clear, non-negotiable lines

That AI should not cross.

Red Line 1: Life-or-death decisions

Do not use AI to:

Decide who gets ICU admission

Determine code status recommendations

Choose between treatment options when the outcome is uncertain

Why?

These require moral judgment.

Context you can see but can’t quantify.

Things AI cannot process.

A patient’s wishes. Family dynamics. Quality of life considerations.

These are human decisions.

Red Line 2: Dosing critical medications

Do not use AI to calculate:

Vasopressor doses

Anticoagulation adjustments

Chemotherapy protocols

Insulin sliding scales

Why?

We covered this earlier: AI tools are unreliable at math.

And when the math involves life-sustaining drugs:

Your brain. Not the algorithm.

Red Line 3: Interpreting imaging without verification

Do not use AI to:

Make final radiology diagnoses

Clear trauma scans alone

Determine surgical necessity from images

Why?

AI can miss subtle findings.

Or hallucinate findings that aren’t there.

You need human eyes on critical images.

Red Line 4: Replacing informed consent discussions

Do not use AI to:

Explain procedure risks to patients

Generate consent forms without review

Answer patient questions about surgery

Why?

Consent requires nuance. Reading the room.

Understanding what the patient actually understands.

AI can’t do that.

Red Line 5: Making diagnoses in high-stakes presentations

Do not use AI to:

Diagnose chest pain in the ED

Interpret sepsis workups

Evaluate acute neuro changes

Why?

AI gives probabilities based on patterns.

You see the patient in front of you.

Their color. Their distress. The things that don’t fit the pattern.

That’s diagnostic gold AI doesn’t have.

The framework:

Ask yourself:

‘If this goes wrong, can I defend using AI?’

If the answer involves:

‘Well, the AI said…’

Don’t use AI.

Those decisions require accountability that only humans can give.

The rule I follow:

If a lawyer, ethics committee, or grieving family asks:

‘Why did you make that decision?’

And your answer includes:

‘The AI suggested…’

You crossed a line.

Here’s the truth:

AI is a tool.

Like a stethoscope. Like a lab value.

It informs. It doesn’t decide.

When you use it,

You’re still the one accountable.

Your name. Your license. Your judgment.

So use it where it helps.

Refuse it where it replaces.

The line isn’t always clear.

But when you’re unsure,

Ask yourself:

‘Would I be comfortable explaining this decision to this patient’s family?’

If the answer involves hiding AI’s role,

Don’t use it.”

Stay updated with Hemostasis Today.
